Mathematician Kevin Buzzard of Imperial College London is teaching computers how to prove one of the most famous problems in math history: Fermat’s last theorem.

Solving the problem isn’t the point. There’s already an accepted proof that was finalized in 1995. That work is a tortuous maze of mathematics that fills about 130 pages over two papers. It spans mathematical fields and unites abstract ideas that previously seemed to have little to say to one another. To understand the proof is to understand a wide swath of mathematics. Eventually, Buzzard says, a computer program that can verify something so sprawling will be able to help mathematicians explore, scrutinize and solve a wide range of problems.

For years, Buzzard and a handful of mathematicians have been working on projects like this to formalize mathematics. Historically, formalization has involved expressing mathematical ideas as precisely as possible, erasing all ambiguity. Today, that means translating definitions and theorems into computer code so that a specialized program can verify every painstaking step.
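To give a feel for what that translation looks like, here is a minimal sketch in Lean 4, the theorem prover Buzzard's project uses. It is a toy example and is not taken from the project; the names `IsEven` and `isEven_add` are made up for illustration. It states a definition (what it means for a number to be even) and a theorem (the sum of two even numbers is even), with every step spelled out so the program can check it.

```lean
-- Hypothetical example, not taken from the Fermat project.
-- Definition: a natural number n is even if n = 2 * k for some k.
def IsEven (n : Nat) : Prop := ∃ k, n = 2 * k

-- Theorem: the sum of two even numbers is even.
theorem isEven_add (m n : Nat) (hm : IsEven m) (hn : IsEven n) :
    IsEven (m + n) :=
  match hm, hn with
  | ⟨a, ha⟩, ⟨b, hb⟩ =>
    -- Witness a + b: rewrite m and n, then distribute 2 over the sum.
    ⟨a + b, by rw [ha, hb, Nat.mul_add]⟩
```

Even a fact this small has to name its witness and justify the algebra explicitly, which hints at why encoding a 130-page proof is such a large undertaking.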

Formalization “is a new paradigm for mathematical proof writing that really demands the proof writer be much more rigorous than usual,” says mathematician Emily Riehl of Johns Hopkins University. “The computer is not really filling in the details.” The person who is writing the proof has to do that instead.

But formalizing the proof of Fermat’s last theorem is just the cornerstone of an even bigger vision: to build a digital library of all of mathematics that will enable computers to be useful assistants to mathematicians.

Even now, most mathematicians write proofs that rely on spoken or written descriptions and intuition, traditional tools that until recently seemed out of the reach of computers. As such, modern formalization has long been a niche effort because it requires expressing mathematical ideas as code.

Now, the explosion in artificial intelligence has propelled efforts, spearheaded by technology companies, to combine large language models with theorem provers to develop systems capable of autoformalization. In theory, such systems could eventually be able to do things that humans can’t.

That’s a divisive goal, and one that troubles many mathematicians for how it could reshape mathematical research and progress. What began as a philosophical question (What is the most precision possible in a mathematical proof?) has now become an existential one: Will the quest for precision upend the field?

“We’re really on the cusp of a change,” says Patrick Shafto, a mathematician and computer scientist at Rutgers University in Newark, N.J., and at DARPA, a research and development agency within the U.S. Department of Defense.

“Mathematics is now mostly practiced at a board, as it was 100 years ago. But I think in five years, it is very likely that every single young mathematician uses AI,” Shafto says. “Advances in AI and formalization have the potential of really highlighting the interesting aspects of being human and our quest for knowledge, as humans.”

My robot assistant

AI may have acted like an accelerant thrown on the fires of formalization, but the idea of using a machine for mathematical proofs isn’t new. In 1956, researchers at the RAND Corporation introduced a computer program (they called it a “logic theory machine”) that checked proofs published in Principia Mathematica, a landmark series of books by mathematicians Bertrand Russell and Alfred North Whitehead.

“I am delighted to know that Principia Mathematica can now be done by machinery,” Russell wrote in a letter to Herbert Simon, one of the researchers behind the thinking machine. “I wish Whitehead and I had known of this possibility before we both wasted 10 years doing it by hand.”

Even though the practice is not widespread, some mathematicians have used computer programs called interactive theorem provers in the last few decades to verify existing mathematical proofs. In 1998, mathematician Thomas Hales announced that he and his student Samuel Ferguson had used a computer to prove the Kepler conjecture, a statement about the optimal way to stack spheres that was originally posed by Johannes Kepler in the 17th century.

The proof met some resistance from other mathematicians, who argued that because the computer had churned through so many enormous, complicated calculations representing all possible configurations of stacked spheres, humans couldn’t check the accuracy of the answers, and therefore couldn’t verify the reasoning. So from 2003 to 2014, Hales used digital assistants to formalize and verify his own proof.

In February, by combining AI with an interactive theorem prover, Ukrainian mathematician Maryna Viazovska and others finished formalizing proofs of the Kepler conjecture in eight and 24 dimensions, digital versions of work that had earned Viazovska a Fields Medal in 2022.

Buzzard’s journey with formalization began in 2017 with a kind of mathematical midlife crisis. He had just reviewed a paper for publication in a math journal and, after a lengthy exchange with the paper’s author, couldn’t determine whether the argument was rigorous.

That frustration led him to think broadly about the state of mathematics, and what he thought it could be. “And I got pretty unhappy with the state of things,” he said during a talk in September. He began wondering: Could technology take the guesswork out of verifying math? After all, mathematicians don’t get into the field because they want to check under the hood of other proofs; they want to do something new. If verification could be offloaded to a machine, why not?

Buzzard began learning how to use Lean, which is both a programming language and an interactive theorem prover. Lean first appeared in 2013, the brainchild of Leo de Moura, a computer scientist at Microsoft, who designed it as a way to verify mathematical arguments, especially in computer code. Lean is the same theorem prover used to formalize Viazovska’s proof in February.

The more Buzzard learned, the more excited he got. He began to see formalization as the act of digitizing mathematics, which in turn would modernize the way that mathematicians use machines. He likens it to the digitization of music. When music companies began selling CDs, Buzzard says, he at first dismissed the technology as a way to force listeners to re-buy music they already owned. Then he realized that CDs allowed people to access, share and interact with music in ways previously impossible, a change amplified by the advent of streaming services.

“Digitizing music has completely turned the world of music on its head,” Buzzard says. “If we digitize mathematics, maybe at some point it will turn math on its head.” He looked back at his own education, and how he taught math, and realized people had been learning the subject in the same way for the last century. It was time to modernize.

And Buzzard decided to start with a centuries-old equation that was, until recently, the most famous unsolved problem in math.

A huge mystery in a tiny margin

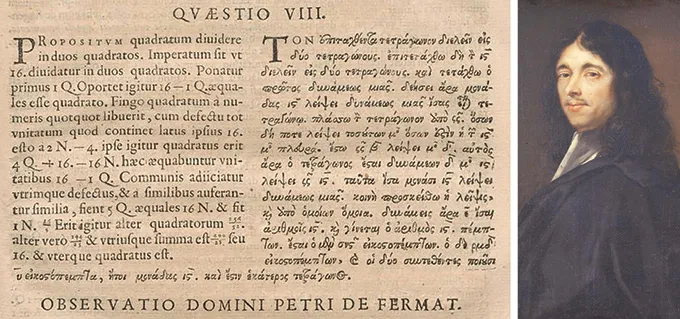

According to legend, in or around 1637, French mathematician Pierre de Fermat scribbled a problem and a note in a copy of Arithmetica, a book by third-century Greek mathematician Diophantus. The problem involves this equation: aⁿ + bⁿ = cⁿ. If n = 2, then we know there are infinitely many solutions. That’s because in that case, the equation becomes the Pythagorean theorem and a, b and c correspond to the side lengths of right triangles.

Fermat stated that there are no positive whole numbers a, b and c that can solve this equation if n is a whole number greater than 2. Next to the problem, Fermat wrote in Latin: “I have a truly marvelous demonstration of this proposition that this margin is too narrow to contain.”
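Fermat's claim is short enough to write down in Lean 4 in a few lines. The sketch below is only an illustration: `FermatStatement` is a made-up name, it uses natural numbers and spells out the assumption that a, b and c are positive (otherwise trivial solutions with zeros would count), and it records the statement only, with no proof. The first line checks one familiar solution for the n = 2 case.

```lean
-- The case n = 2 does have solutions, for example the triple (3, 4, 5).
example : 3 ^ 2 + 4 ^ 2 = 5 ^ 2 := by decide

-- Statement only, with no proof attached. `FermatStatement` is a hypothetical name.
def FermatStatement : Prop :=
  ∀ n a b c : Nat, n > 2 → a > 0 → b > 0 → c > 0 → a ^ n + b ^ n ≠ c ^ n
```

Writing the statement takes one line. Supplying a proof that a program will accept is the multi-year part.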

Fermat’s son discovered the book and the note, but not until after his father’s death. The theorem was easy to state and hard to prove, and Fermat’s missing proof vexed mathematicians for centuries. No one ever found his “truly marvelous” argument, and no mathematician ever conjured a proof that would remotely fit that description. Some question whether it ever existed, or conjecture that whatever proof Fermat had in mind was fatally flawed. It’s tempting to view Fermat’s statement as a practical joke with extraordinarily long legs.

British mathematician Andrew Wiles finally cracked it in the late 20th century and later collaborated with mathematician Richard Taylor to finalize it. Their proof used arcane, far-reaching mathematical concepts that weren’t around in the 17th century, ideas that bridge mathematical fields that once seemed unconnected.

Over centuries, by probing Fermat’s simple problem, mathematicians have made enormous breakthroughs in many fields beyond number theory, the field most closely associated with the original problem. In one of the most important, German mathematician Ernst Kummer proved in 1847 that the theorem held for the regular primes, a subset of prime numbers. He did so by developing ideas that laid the groundwork for a new field called algebraic number theory.

In 2023, with support from the U.K.’s Engineering and Physical Sciences Research Council, Buzzard launched his formalization project with Fermat’s last theorem partly because of the proof’s size and significance, and partly because many of his colleagues at Imperial College London are exploring ideas used in the proof. He knew it would be a Herculean, messy task to encode every definition and lemma (akin to a mini-theorem embedded in a larger proof) that plays some role in the overall scheme. And it’s been a rocky road. “I’m kind of all over the place, and I’ve had some false starts,” he says.

He’s not toiling alone. At first, Buzzard says, about 30 people were contributing to his formalization effort by writing code for Lean, all of them familiar names and faces. Many more have reached out with ideas or otherwise tried to join the effort, he says, and just over 60 have had their coded contributions verified and accepted. Since then, the project has grown into an interdisciplinary collaboration on a scale that Buzzard couldn’t have imagined. Anonymous number theorists are reaching out with ideas, he says. Last August, he says, he went camping at a music festival for a week and returned to find 7,000 unread messages about various aspects of the proof.

In January, the effort reached one of its first major milestones. “We proved that a certain thing was finite,” paving the way for the next step, Buzzard says. The effort required for that milestone, however, has led him to doubt whether they’ll finish in his targeted timeline of five years.

One of the biggest challenges, Buzzard says, is figuring out how to quickly build Lean’s library of mathematical knowledge. This is a bottleneck for AI applications in math, too. One problem “in this whole area of AI for math is that there’s a terrible lack of interesting datasets,” he says.

In a separate project funded by Renaissance Philanthropy, Buzzard and Rutgers mathematician Alex Kontorovich are further contributing to Lean’s library, and expanding its applicability, by formalizing problems from a list of recent, particularly thorny theorems representing the cutting edge of mathematics in the 21st century.

The implications reach far beyond Buzzard’s projects. An expanding body of mathematical knowledge could enable working mathematicians, if they were so inclined, to find fault lines in new proofs, or determine whether certain conjectures might hold up. Referees and editors who review papers for journals would be free to focus on the big ideas behind submitted papers rather than the excruciatingly fine details of the logic behind the proof.

“That’s game changing,” Riehl says. “Proofs are hard, and the papers are already very long.” Errors can slip through.

A theorem prover with access to a robust library of mathematical knowledge could be used to identify hallucinations and other errors in mathematical proofs generated by AI programs. Having a proof be 95 percent correct, after all, may mean the proof isn’t correct at all. “One hallucination can break an entire mathematical argument because that’s the nature of mathematics,” Buzzard says.

For that reason, tech companies have been developing programs that combine AI tools like Google’s Gemini or OpenAI’s ChatGPT with the fact-checking rigor of Lean. So has the U.S. government: In early 2025, DARPA launched a program called Exponentiating Mathematics, or expMath, with the goal of using AI to accelerate the rate of mathematical discovery, primarily by offloading the finer details of constructing a proof.

All of these efforts tie directly into a more controversial and quickly evolving issue facing mathematics today: figuring out how AI is going to change the field, and whether the AI math invasion is a good thing.

A rising AI specter

The problem with large language models and math, so far, has largely been one of accuracy. To be fair, LLMs like those that power ChatGPT and Anthropic’s Claude are better at math problems than anyone expected, and they have improved with new iterations. But they’re not perfect.

“If you go to ChatGPT and ask it to prove a theorem, it spits out a text,” Riehl says. It might sound good and look good and use correct words, she says. “But there’s nothing in the way that large language models are designed to guarantee that [it’s] correct.” That’s because they’re designed to respond to queries using probability and are not prioritizing accuracy. And even if it is 99 percent correct, she says, that’s not good enough for a math proof.

When combined with a theorem prover like Lean, though, LLMs get much better.

Last July, the AI company Harmonic made headlines after its program Aristotle, which uses Lean to verify and refine its work, scored high enough for a gold medal, the highest prize, in the annual International Mathematical Olympiad. During this two-day event, participants, all under the age of 20, work through six exceptionally difficult problems. More than 600 human contestants entered the 2025 contest held in Queensland, Australia; 72 scored at least 35 out of a possible 42 points, earning a gold medal. In addition to Aristotle, AI programs used by Google and OpenAI similarly performed gold medal–level work.

Some mathematicians didn’t see the olympiad accomplishments as showing anything meaningful about the way math is actually done. But more interesting results soon emerged. In July, Rutgers’ Kontorovich and Terence Tao, a UCLA mathematician and Fields Medalist, announced that progress on their 18-month effort to formalize something called the strong prime number theorem had slowed. But then in September, a company called Math, Inc., supported by a grant from the DARPA expMath project, announced that it had used its program, called Gauss, to finish the task in just three weeks.

Gauss combined Lean with AI language models to autoformalize the remainder of the proof. That is, the AI program translated definitions and arguments into Lean, which checked the entire argument for accuracy. More recently, in January, researchers reported using Aristotle and GPT-5.2 to generate, formalize and verify a proof of a problem posed by prolific Hungarian mathematician Paul Erdős in 1975. This is the latest in a recent string of proofs of Erdős problems that used AI in some way.

So far, Buzzard greets advances like these with skepticism. Right now, there are no guardrails, he says. And even when Lean reports that AI-generated code is correct, the code may not actually represent the theorem that the mathematician thought they were proving.
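A contrived Lean 4 sketch shows how that kind of mismatch can arise. The theorem below is a made-up example (the name `looks_like_fermat` is hypothetical) and is not drawn from any real AI output. Its conclusion looks like Fermat's claim and Lean accepts the proof, but the hypothesis `n < 0` can never be true for a natural number, so the statement says nothing at all about Fermat's equation.

```lean
-- Hypothetical illustration of a mis-stated theorem.
-- It resembles Fermat's claim, but the hypothesis `n < 0` can never hold
-- for a natural number, so the statement is vacuously true.
theorem looks_like_fermat (n a b c : Nat) (h : n < 0) :
    a ^ n + b ^ n ≠ c ^ n :=
  absurd h (Nat.not_lt_zero n)
```

Lean certifies only that the proof matches the statement as written. Whether the statement matches the intended mathematics still has to be judged by a person reading it.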

At the same time, Buzzard admits that the picture could change quickly given the rapid pace of AI advancement. So far, he hasn’t seen any AI advances that could help him in his work. But he allows that it’s possible in five years that some tool could emerge that would make short work of formalizing the proof of Fermat’s last theorem. “I do wonder whether autoformalization will get to the point where it will just, you know, be able to eat the literature,” Buzzard says.

Helping humans

Many mathematicians predict that humans will always be important in math, but because of the use of AI and formalization, their role could change dramatically.

“The problem-solving aspect of mathematics will mostly vanish,” says mathematician and computer scientist Christian Szegedy of Math, Inc. He previously helped develop Google DeepMind’s AlphaProof program and co-led the Elon Musk–founded company xAI. The new job of humans in math, he says, will be “to steer the exploration of mathematics to the areas that we actually care about,” rather than muddling through the logic and fine details of a proof. He sees the rise of AI-driven autoformalization as a way toward creating a digital, smart assistant.

Szegedy thinks real progress will be marked by AI’s ability to reason in new and creative ways. He predicts that this year AI systems will achieve “superhuman intelligence” in math, meaning they will be able to solve problems that humans can’t. So far, that hasn’t happened.

Szegedy also predicts that at some point, AI models will be better at formalizing proofs than humans, which doesn’t seem out of reach given the fast pace of development in 2025. Soon, he thinks, the models will be able to create a proof from scratch. “And then, the game is over.” He doesn’t think humans will be out of the game; he means that the essential role of the mathematician will be purely creative, relying on an AI collaborator to work out the details.

DARPA’s Shafto, who leads the expMath project, sees the changes as giving mathematicians more time and space to think about ideas rather than details. “If you talk to mathematicians, of course, yes, they prove things and want them to be correct, but that’s not what they’re doing most of the time,” he says. “They’re talking about ideas and how they relate and what might work. Many of them would be happy to have a student or collaborator whom they could trust to kind of prove their tiny lemmas for them.”

Others in the field, though, eye the coming AI wave with skepticism and concern for the future. “Many of my colleagues have absolutely no interest in it,” says mathematician Aravind Asok at the University of Southern California in Los Angeles.

Recently, Asok says, AI companies have recast mathematical accomplishment as a tool of legitimization. Math itself, he says, becomes a problem to be solved. He finds that notion misguided and “a complete misapprehension of what mathematics is.” Insisting that math can be solved by the skills of AI models, or that the primary goal is accuracy, requires a narrow view of the field.

But it’s a view that has already infiltrated his classroom: Asok says he no longer assigns homework because too many of his graduate students use AI to generate answers. That defeats the purpose. “They need to struggle and engage with [the work] in a way to really build up their own intuitions,” he says. But it’s much faster to ask ChatGPT.

Asok worries that conversations around AI and math focus too closely on correctness. That’s important, he says, “but making mistakes is part of learning.” There have been plenty of errors, he adds, that have helped the field of research mathematics move forward.

Formalization is a powerful tool that could help push math in interesting directions, but Asok worries that if students learn math as something to be done with AI, then tomorrow’s mathematicians will lack the creativity needed to find truly new frontiers. “It’s like saying that there’s only one way to have music, or only one way to talk in a conversation,” he says.

Asok also worries that AI may be a threat to the profession because of how progress is perceived. Mathematicians often rely on federal funding, he says, and if the U.S. government adopts the narrative that math itself has been solved by AI companies, support for new work and new ideas could wane. The teaching of math, he says, might be offloaded to AI agents and programs. “I feel like the professional status of mathematicians could change immensely.”

Buzzard maintains that, with or without AI, formalization can help bring math and math education into a modern age. Mathematicians would benefit from an interactive theorem prover with access to verified mathematical knowledge not only to check their work, but also as a proving ground for new AI-generated work, in part to separate sloppy code from bona fide advances.

“I just want to make my colleagues’ lives better,” Buzzard says. “I’m not trying to destroy them. I’m actually trying to help them.”