Public health organizations warn that automated censorship on social media platforms endangers lives by blocking educational posts about illicit drugs in circulation.

Groups such as the Australian Injecting and Illicit Drug Users League (AIVL), Pill Testing Australia, CanTEST, and New Zealand’s KnowYourStuffNZ report removals of critical alerts, suspensions of accounts, and even permanent deletions of pages and personal profiles.

Automated Moderation Flags Harm Reduction Messages

Meta’s automated content moderation systems on Facebook and Instagram flag these alerts as promoting drug use, preventing them from reaching at-risk audiences.

“These services rely on social media to tell people where they are, what’s in circulation and how to stay safe,” states John Gobeil, chief executive of AIVL. “If these messages are blocked, people don't know the service exists, and they lose the chance to make safer choices.”

Challenges with AI and Cultural Differences

David Caldicott, an emergency physician and clinical lead at CanTEST and Pill Testing Australia, notes that posts containing drug information are often withheld, altered, or censored. “This is health information, but unfortunately, the transition from a human-mediated system to an AI-mediated system means that it just gets pinged and pulled,” Dr. Caldicott explains.

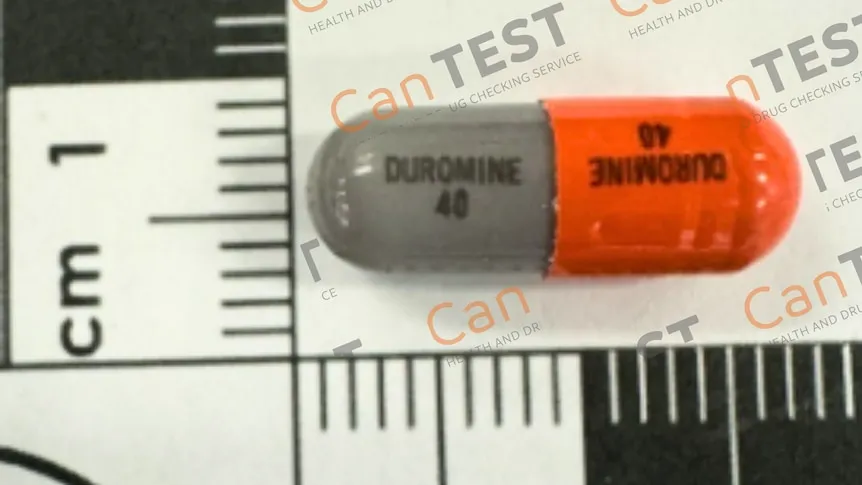

Organizations like CanTEST warn users about dangerous substances that could cause serious illness or death, and provide drug testing services to promote safer choices and prevent fatalities. Dr. Caldicott attributes part of the problem to differing U.S. approaches to drug education, given Meta’s American ownership.

“There’s an imperative to prevent any conversation about illicit drugs, even if it's health-related,” Dr. Caldicott says. He adds that while AI exacerbates the problem, social media platforms have lagged in ethical oversight despite their technical capabilities. “It's really time for them to grow up and catch up with what’s now available to young people.”

Dr. Caldicott calls the interference “unacceptable,” particularly since young people rely on social media for news and information. “We’re providing health-related information to a group of young people who clearly require it, and people without any health qualifications are interfering with that message,” he states. Without access to these alerts, users may consume dangerous drugs uninformed, risking death.

Calls for Intervention and Alternatives

Dr. Caldicott urges social media companies to engage with healthcare providers and allow timely distribution of vital information. Affected organizations call on the eSafety Commissioner to compel Meta to restore accounts and content removed for alleged drug-related violations.

In response, CanTEST launched the Night Coach app, which bypasses social media to raise awareness and disseminate information. “We need to think more about how we can engage with these social media companies because essentially they’re pulling all the strings as far as the health information is concerned in this space,” Dr. Caldicott says.

Stephanie Stephens from Directions Health Services, which operates CanTEST, confirms ongoing efforts to resolve the issues, including direct outreach to Meta, reposting content, and using websites. She highlights the platform’s large reach, with tens of thousands of followers. In one case, a December post about a potentially lethal opioid was removed just before the Spilt Milk festival in Canberra.

Ms. Stephens urges the public to visit the CanTEST website for alerts and drug safety information.