Micron Introduces High-Density Memory for AI Workloads

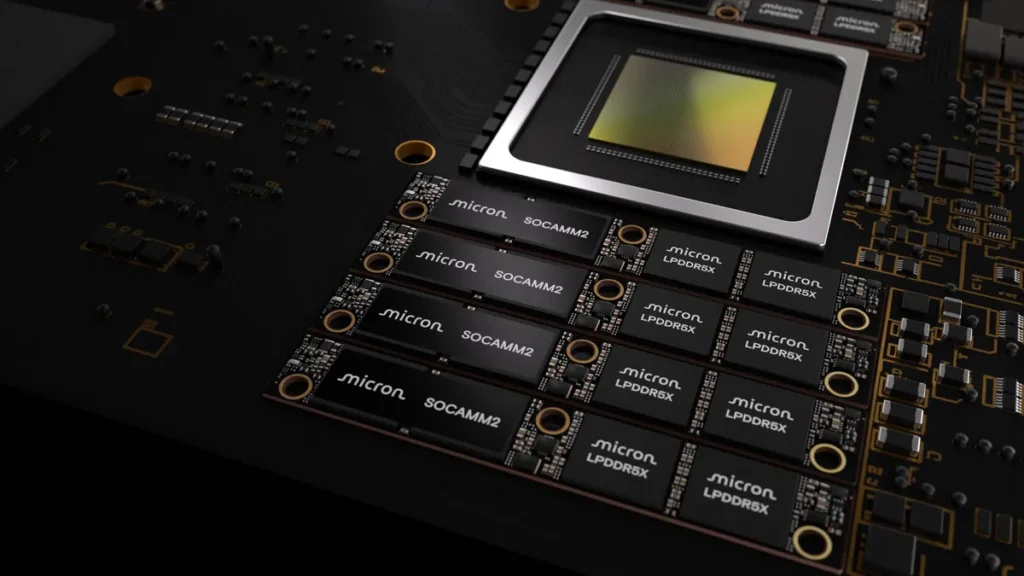

Large language models (LLMs) and advanced inference pipelines demand massive memory pools, prompting a redesign of server memory architectures. Micron now offers a 256GB SOCAMM2 memory module tailored for data centers, prioritizing capacity, bandwidth, and power efficiency.

This module packs 64 monolithic 32Gb LPDDR5X dies into a compact LPDRAM package, meeting the growing memory needs of modern AI tasks.

Boosting Server Capacity to 2TB

The design raises the maximum memory per processor configuration. Installing eight SOCAMM2 modules in an eight-channel server CPU delivers 2TB of LPDRAM in total, a one-third increase over what the prior 192GB modules allowed. This supports larger context windows and intensive inference operations.
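The capacity figures can be checked with a few lines of arithmetic. The sketch below is illustrative only: the constant and function names are made up for this example, it assumes one module per memory channel, and it uses the binary convention of 1TB = 1024GB.

```python
from fractions import Fraction

# Figures taken from the article.
GBIT_PER_DIE = 32        # 32Gb monolithic LPDRAM die
DIES_PER_MODULE = 64     # dies in one 256GB SOCAMM2 module
MODULES_PER_CPU = 8      # assumption: one module per channel, eight channels
PRIOR_MODULE_GB = 192    # previous-generation module capacity


def module_capacity_gb(dies: int, gbit_per_die: int) -> int:
    """Convert a die count and per-die density in gigabits to gigabytes."""
    return dies * gbit_per_die // 8  # 8 bits per byte


module_gb = module_capacity_gb(DIES_PER_MODULE, GBIT_PER_DIE)
total_gb = module_gb * MODULES_PER_CPU
total_tb = total_gb / 1024  # assumption: 1TB = 1024GB

# Relative gain of the new module over the prior 192GB module.
increase = Fraction(module_gb - PRIOR_MODULE_GB, PRIOR_MODULE_GB)

print(f"Module capacity: {module_gb}GB")
print(f"Per-CPU capacity: {total_gb}GB ({total_tb:g}TB)")
print(f"Increase over {PRIOR_MODULE_GB}GB modules: {increase}")
```

The per-die density is given in gigabits, so 64 dies at 32Gb each come to 256GB per module, and eight such modules reach 2TB.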

Power Efficiency and Compact Design

The SOCAMM2 module outperforms traditional server memory in efficiency. “Micron’s 256GB SOCAMM2 offering enables the most power-efficient CPU-attached memory solution for both AI and HPC,” said Raj Narasimhan, senior vice president and general manager of Micron’s Cloud Memory Business Unit. “Our continued leadership in low-power memory solutions for data center applications has uniquely positioned us to be the first to deliver a 32Gb monolithic LPDRAM die, helping drive industry adoption of more power-efficient, high-capacity system architectures.”

It uses about one-third the power of comparable RDIMMs and occupies one-third the footprint, enabling denser racks, lower thermal loads, and reduced infrastructure costs in data centers.

The modular SOCAMM2 design supports liquid-cooled systems and eases maintenance and upgrades as AI models and datasets grow more complex.

Performance Gains in AI Inference

For unified memory setups, the module speeds up key-value (KV) cache offloading, yielding over 2.3x faster time-to-first-token in long-context inference. Standalone CPU workloads show more than 3x better performance per watt versus standard server memory modules.

Micron’s LPDRAM lineup includes components from 8GB to 64GB and SOCAMM2 modules from 48GB to 256GB. Customer samples of the 256GB version are shipping now.